Deep Dive: CDN Architecture – Delivering Content at Scale

——————Key Takeaways——————

What is a CDN?

A CDN (Content Delivery Network) is a distributed network of servers designed to cache and deliver content closer to users, improving speed, reducing latency, and enhancing availability.

Why is a CDN Essential?

✅ Performance Improvements – Reduces load times by caching content at edge locations.

✅ Load Distribution – Offloads traffic from origin servers to handle spikes efficiently.

✅ Reducing Latency – Cuts connection setup overhead with TCP connection reuse and keep-alive, and routes requests to a nearby edge with Anycast.

✅ Cost Optimization – Lowers bandwidth costs by reducing origin server requests.

How Does a CDN Work?

📌 Request Routing – Uses DNS resolution, Anycast, or geolocation to direct traffic.

📌 Content Caching – Stores static and dynamic content based on caching policies.

📌 Edge Server Interactions – Fetches content from the origin when necessary.

Key Components of a CDN

🌍 Edge Servers – Cache and serve content from Points of Presence (PoPs).

💾 Origin Servers – The main source of content, contacted only when needed.

🏢 Points of Presence (PoPs) – Global clusters of edge servers for redundancy.

🔄 Anycast Networking – Routes users to the nearest PoP in network terms, with failover when a PoP goes offline.

CDN Caching Strategies

⏳ Time-to-Live (TTL) – Defines cache expiration time for content freshness.

🚀 Cache Invalidation – Removes outdated content via purging mechanisms.

🔄 Stale-While-Revalidate – Serves stale content while updating in the background.

CDN Load Balancing Techniques

🌎 Geo-Based Load Balancing – Routes users to the closest CDN PoP.

🛜 DNS-Based Load Balancing – Uses DNS responses for traffic distribution.

📡 Anycast Routing – Directs traffic to the nearest available CDN node.

Security Features in CDNs

🛡️ DDoS Protection – Mitigates large-scale attacks with traffic filtering.

🕵️ Web Application Firewall (WAF) – Blocks SQL injection & XSS attacks.

🤖 Bot Mitigation – Identifies and blocks harmful automated traffic.

Multi-CDN Architectures

🔗 Benefits of Multi-CDN – Improves redundancy and optimizes performance.

🚨 Failover Mechanisms – Uses health checks to reroute traffic on failures.

🔀 Traffic Steering Strategies – Adjusts routing based on latency and cost.

Challenges & Best Practices

⚠️ Cache Inconsistencies – Use purging to remove outdated content; stale-while-revalidate keeps responses fast while caches refresh.

⚠️ Latency Variability – Optimize routing and use edge computing.

⚠️ Cost Considerations – Monitor request-based pricing and PoP utilization.

✅ Optimize cache hit ratios with proper TTL settings.

✅ Implement smart routing for better performance.

✅ Monitor traffic patterns, cache efficiency, and security threats.

Real-World Use Cases

📺 Streaming Platforms (Netflix, YouTube) – Reduces buffering & optimizes delivery.

🛒 E-commerce (Amazon, Shopify) – Speeds up page loads & handles traffic surges.

📡 API Acceleration (Google, Facebook) – Caches API responses to lower latency.

Interview Questions Covered

🔹 How does a CDN improve performance?

🔹 What are the trade-offs between different caching strategies?

🔹 How does Anycast routing benefit a CDN?

🔹 How would you design a global CDN system?

🚀 Mastering CDN architectures is essential for building scalable, high-performance systems!

——————DEEP DIVE——————

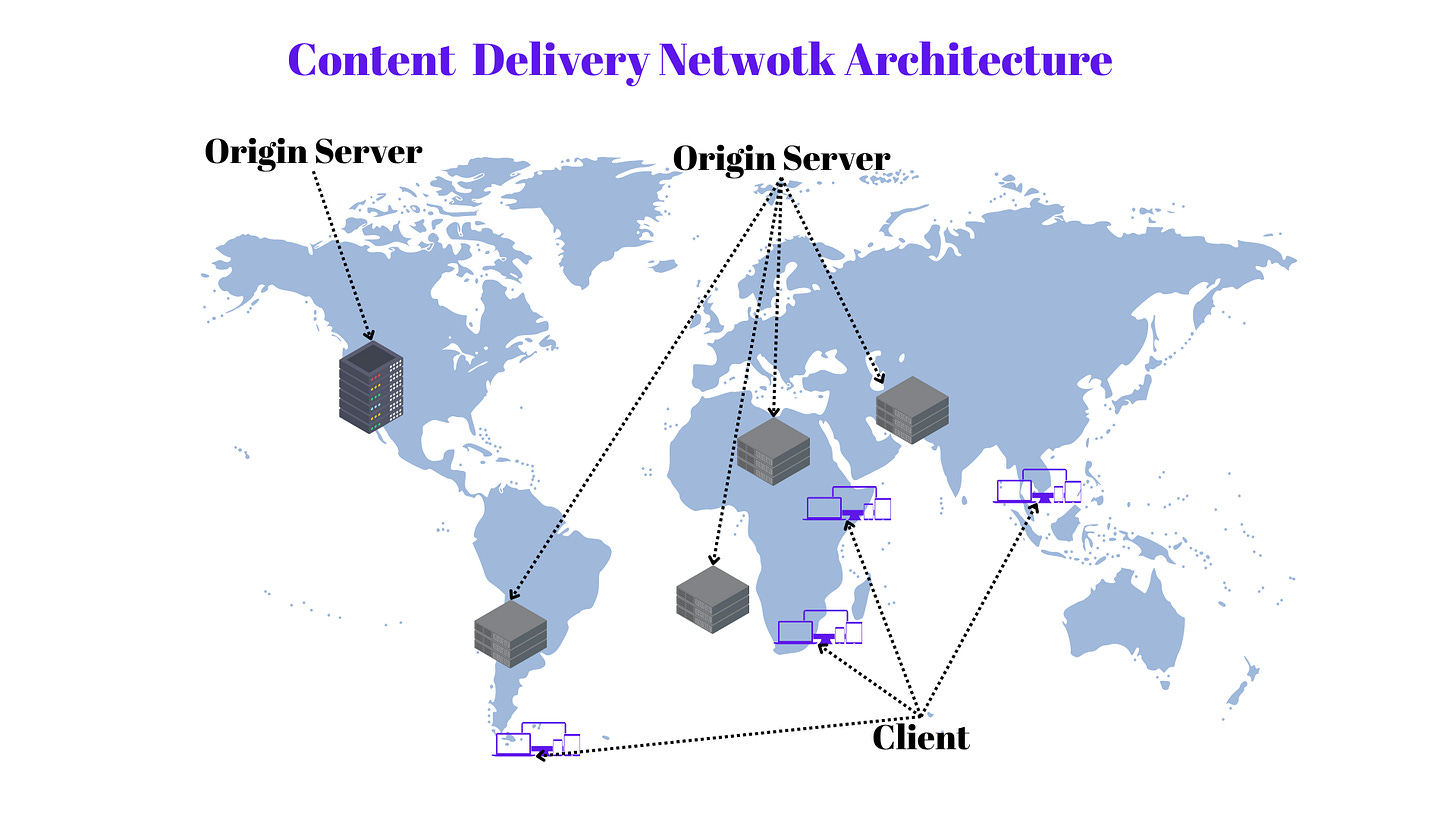

1. What is a CDN?

A Content Delivery Network (CDN) is a distributed network of servers strategically placed across different geographical locations to deliver content efficiently. It improves load times, reduces latency, and enhances availability by caching content closer to end users. CDNs serve a variety of content, including static assets (images, CSS, JavaScript), dynamic content, videos, and APIs.

2. Why is a CDN Essential?

a. Performance Improvements

CDNs reduce the physical distance between users and servers by caching content at edge locations. This minimizes round-trip time (RTT) and improves page load speeds, which directly impacts user experience and conversion rates.
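As a rough illustration of why distance matters, the sketch below estimates a lower bound on RTT from distance alone. It assumes signals travel through fiber at about 200,000 km/s (roughly two thirds of the speed of light in a vacuum). The function name and both distances are hypothetical, and real RTTs are higher because of routing detours, queuing, and processing.

```python
# Assumption: signals in optical fiber travel at roughly 200,000 km/s,
# which is 200 km per millisecond.
FIBER_KM_PER_MS = 200.0

def min_rtt_ms(distance_km: float) -> float:
    """Lower bound on round-trip time for a given one-way distance."""
    return 2 * distance_km / FIBER_KM_PER_MS

origin_rtt = min_rtt_ms(8000)  # hypothetical user 8,000 km from the origin
edge_rtt = min_rtt_ms(100)     # hypothetical edge PoP 100 km from the user
print(f"origin: {origin_rtt:.0f} ms, edge: {edge_rtt:.0f} ms")
```

Every round trip a page load needs (handshakes, requests, redirects) pays this cost again, so shortening the path pays off several times per page.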

b. Load Distribution

By offloading traffic from origin servers, CDNs prevent bottlenecks and reduce infrastructure costs. This is crucial for handling traffic spikes, such as during Black Friday sales or live streaming events.

c. Reducing Latency

Latency is the delay before data transfer begins. CDNs use optimizations like TCP connection reuse, keep-alive, and prefetching to lower latency. Additionally, they leverage Anycast networking to direct requests to the nearest available edge server.
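To see why connection reuse helps, the following sketch simply counts round trips. It assumes a new TCP connection costs one RTT for the handshake before a request can be sent, and it ignores server processing and transfer time. `request_time_ms` and its parameters are illustrative names, not part of any CDN API.

```python
def request_time_ms(rtt_ms: float, reused: bool, extra_handshake_rtts: int = 0) -> float:
    """Rough time to first response byte, counted in round trips."""
    # A new connection pays one RTT for the TCP handshake, plus any extra
    # handshake round trips (for example TLS) passed in by the caller.
    setup_rtts = 0 if reused else 1 + extra_handshake_rtts
    # One more RTT carries the request out and the first response bytes back.
    return (setup_rtts + 1) * rtt_ms

cold = request_time_ms(50, reused=False)
warm = request_time_ms(50, reused=True)
print(f"new connection: {cold} ms, reused connection: {warm} ms")
```

Keep-alive lets an edge server hold warm connections to the origin, so a cache miss does not have to pay the setup cost again.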

d. Cost Optimization

Instead of relying solely on expensive origin infrastructure, businesses use CDNs to reduce bandwidth costs. CDNs negotiate peering agreements with ISPs, optimizing traffic routing and lowering data transfer expenses.

3. How Does a CDN Work?

a. Request Routing

When a user requests content, the CDN selects an edge server using DNS resolution, Anycast routing, or IP geolocation. The request is directed to a nearby edge server, which answers from its cache if it holds a fresh copy and otherwise fetches the content from the origin.

b. Content Caching

CDNs cache content based on caching policies such as Time-to-Live (TTL) headers, cache purging, and revalidation mechanisms. Popular strategies include:

Static Content Caching: Storing assets like images, fonts, and scripts.

Dynamic Content Caching: Partial caching of API responses and HTML pages.

Edge Side Includes (ESI): Assembling pages dynamically at the edge.
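Edge-side assembly can be sketched in a few lines. `<esi:include>` is the include tag from the ESI language; the regular expression, the `assemble` helper, and the fragment paths are assumptions made for this example. A real ESI processor would fetch and cache each fragment over HTTP and support far more of the language.

```python
import re

# Matches self-closing tags such as <esi:include src="/fragments/cart"/>
ESI_INCLUDE = re.compile(r'<esi:include\s+src="([^"]+)"\s*/>')

def assemble(template: str, fragments: dict[str, str]) -> str:
    """Replace each esi:include tag with the body of the named fragment."""
    def resolve(match: re.Match) -> str:
        # Unknown fragments are replaced with an empty string in this sketch.
        return fragments.get(match.group(1), "")
    return ESI_INCLUDE.sub(resolve, template)

page = assemble(
    '<h1>Shop</h1><esi:include src="/fragments/cart"/>',
    {"/fragments/cart": "<p>3 items</p>"},
)
print(page)
```

The cacheable page shell and the per-user fragment can then have different caching policies while still arriving as one response.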

c. Edge Server Interactions

If the requested content is not cached, the edge server retrieves it from the origin, caches it, and serves it to the user. Subsequent requests are fulfilled from the cache until the content expires or is invalidated.
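The miss-then-hit flow described above could look roughly like this. It is a minimal in-memory sketch, not how any particular CDN implements its cache: `EdgeCache`, the `origin` callback, and the fixed TTL are hypothetical, and the clock is passed in as `now` so the behaviour is easy to follow.

```python
from typing import Callable

class EdgeCache:
    """Toy edge cache: serve from memory, fall back to the origin on a miss."""

    def __init__(self, fetch_from_origin: Callable[[str], bytes], ttl_seconds: float):
        self.fetch_from_origin = fetch_from_origin
        self.ttl_seconds = ttl_seconds
        self.store: dict[str, tuple[bytes, float]] = {}  # path -> (body, expires_at)

    def get(self, path: str, now: float) -> tuple[bytes, str]:
        entry = self.store.get(path)
        if entry is not None and now < entry[1]:
            return entry[0], "HIT"
        # Missing or expired: fetch from the origin and cache the result.
        body = self.fetch_from_origin(path)
        self.store[path] = (body, now + self.ttl_seconds)
        return body, "MISS"

origin_calls = []

def origin(path: str) -> bytes:
    origin_calls.append(path)  # record how often the origin is contacted
    return f"body of {path}".encode()

cache = EdgeCache(origin, ttl_seconds=60)
first = cache.get("/logo.png", now=0)    # not cached yet
second = cache.get("/logo.png", now=30)  # still within the TTL
third = cache.get("/logo.png", now=61)   # TTL has elapsed
print(first[1], second[1], third[1], len(origin_calls))
```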

4. Key Components of a CDN

a. Edge Servers

Edge servers are strategically placed in Points of Presence (PoPs) to cache and serve content closer to users. They play a crucial role in reducing origin load and improving response times.

b. Origin Servers

The origin server is the primary content source. CDNs fetch content from origin servers when necessary, reducing direct exposure to end-user traffic.

c. Points of Presence (PoPs)

PoPs are clusters of edge servers distributed globally. Each PoP handles requests from nearby users, and operating many PoPs provides redundancy: if one fails, traffic can fail over to another.

d. Anycast Networking

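Anycast allows multiple edge servers to share the same IP address. BGP routing then delivers each request to the closest server in network terms, which is usually, though not always, the geographically nearest one. Anycast does not account for server load or congestion by itself, so it can be paired with other load-balancing techniques. If a PoP stops advertising the address, traffic shifts to the next closest one, which improves fault tolerance.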

5. CDN Caching Strategies

a. Time-to-Live (TTL)

TTL defines how long content remains cached before expiration. Short TTL values ensure freshness, while long TTL values maximize cache hit ratios.
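In HTTP, the TTL usually comes from the origin's `Cache-Control` header: `max-age` applies to all caches, and `s-maxage` overrides it for shared caches such as CDNs. The helper below is a simplified sketch with a hypothetical name; it ignores `no-cache`, quoted values, and any TTL overrides configured on the CDN itself.

```python
from typing import Optional

def shared_cache_ttl(cache_control: str) -> Optional[int]:
    """Return the TTL in seconds a shared cache may use, or None if it must not cache."""
    directives = {}
    for part in cache_control.split(","):
        name, _, value = part.strip().partition("=")
        directives[name.lower()] = value
    # Responses marked no-store or private must not be kept by a shared cache.
    if "no-store" in directives or "private" in directives:
        return None
    # s-maxage takes precedence over max-age for shared caches.
    for name in ("s-maxage", "max-age"):
        if directives.get(name, "").isdigit():
            return int(directives[name])
    return None

print(shared_cache_ttl("public, max-age=300, s-maxage=3600"))
```

A common pattern is a long `s-maxage` for the CDN combined with a short `max-age` for browsers, so the edge can be purged when needed while browser copies expire quickly on their own.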

b. Cache Invalidation

When content updates are required, CDNs support purging mechanisms to remove outdated assets. Purging can be manual, automatic, or event-driven.
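A purge boils down to deleting cache entries before their TTL runs out. The sketch below shows purging a single path and purging by path prefix; `PurgeableCache` and its methods are hypothetical names, and a real CDN would also have to propagate the purge to every PoP.

```python
class PurgeableCache:
    """Toy cache that supports purging one path or a whole path prefix."""

    def __init__(self) -> None:
        self.entries: dict[str, bytes] = {}

    def put(self, path: str, body: bytes) -> None:
        self.entries[path] = body

    def purge(self, path: str) -> bool:
        """Remove one entry; returns True if something was removed."""
        return self.entries.pop(path, None) is not None

    def purge_prefix(self, prefix: str) -> int:
        """Remove every entry under a prefix; returns how many were removed."""
        doomed = [p for p in self.entries if p.startswith(prefix)]
        for p in doomed:
            del self.entries[p]
        return len(doomed)

cache = PurgeableCache()
for path in ("/img/a.png", "/img/b.png", "/css/site.css"):
    cache.put(path, b"...")

removed_one = cache.purge("/css/site.css")  # e.g. triggered by a deploy event
removed_many = cache.purge_prefix("/img/")  # e.g. a manual purge of all images
print(removed_one, removed_many, len(cache.entries))
```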

c. Stale-While-Revalidate

This strategy serves stale cached content while fetching updated content in the background. It provides a seamless user experience during cache refreshes.
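The decision a cache makes under this strategy can be sketched as a three-way classification. It assumes a response sent with `Cache-Control: max-age=60, stale-while-revalidate=30`; the function name and return labels are made up for the example.

```python
def classify(age_seconds: float, max_age: float, stale_while_revalidate: float) -> str:
    """Decide how a cache should answer, given the age of its stored copy."""
    if age_seconds < max_age:
        return "serve_fresh"
    if age_seconds < max_age + stale_while_revalidate:
        # Answer immediately with the stale copy and refresh it in the background.
        return "serve_stale_and_revalidate"
    # Too old even for the stale window: the client waits for the origin.
    return "fetch_from_origin"

for age in (30, 75, 120):
    print(age, classify(age, max_age=60, stale_while_revalidate=30))
```

The stale window bounds how out of date a served response can be: at most `max_age + stale_while_revalidate` seconds in this sketch.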

6. CDN Load Balancing Techniques

a. Geo-Based Load Balancing

Requests are routed based on the user’s geographical location, which keeps latency low and can help keep traffic within a region where data residency laws require it.
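One simple way to pick a PoP by location is great-circle distance. The sketch below uses the haversine formula; the three PoP names and coordinates are hypothetical, and it assumes the user's latitude and longitude are already known from an IP geolocation lookup. Geographic distance is only a proxy for network latency.

```python
import math

# Hypothetical PoPs with approximate (latitude, longitude) coordinates.
POPS = {"fra": (50.11, 8.68), "nyc": (40.71, -74.01), "sin": (1.35, 103.82)}

def haversine_km(lat1: float, lon1: float, lat2: float, lon2: float) -> float:
    """Great-circle distance between two points on Earth in kilometres."""
    earth_radius_km = 6371.0
    phi1, phi2 = math.radians(lat1), math.radians(lat2)
    dphi = phi2 - phi1
    dlambda = math.radians(lon2 - lon1)
    a = math.sin(dphi / 2) ** 2 + math.cos(phi1) * math.cos(phi2) * math.sin(dlambda / 2) ** 2
    return 2 * earth_radius_km * math.asin(math.sqrt(a))

def nearest_pop(lat: float, lon: float) -> str:
    """Name of the PoP with the smallest great-circle distance to the user."""
    return min(POPS, key=lambda name: haversine_km(lat, lon, *POPS[name]))

print(nearest_pop(48.86, 2.35))    # a user in Paris
print(nearest_pop(35.68, 139.69))  # a user in Tokyo
```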

b. DNS-Based Load Balancing

CDNs leverage DNS responses to distribute traffic among multiple PoPs, dynamically directing users to the best-performing edge server.
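A weighted DNS answer is one way to picture this. The sketch assumes a hypothetical authoritative DNS server that returns one PoP address per query, chosen in proportion to a weight; the addresses come from the 192.0.2.0/24 documentation range, and setting a weight to 0 drains a PoP.

```python
import random

def pick_pop(weights: dict[str, float], rng: random.Random) -> str:
    """Choose one PoP address with probability proportional to its weight."""
    names = list(weights)
    return rng.choices(names, weights=[weights[n] for n in names], k=1)[0]

rng = random.Random(42)  # seeded so the example is repeatable
weights = {"192.0.2.10": 3, "192.0.2.20": 1, "192.0.2.30": 0}  # last PoP drained
answers = [pick_pop(weights, rng) for _ in range(1000)]
for address in weights:
    print(address, answers.count(address))
```

Because resolvers cache DNS answers for the record's TTL, weight changes take effect gradually rather than instantly.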

c. Anycast Routing

With Anycast, the same IP address is advertised from multiple locations, automatically routing traffic to the nearest available CDN node.
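The routing itself is done by BGP in the network, not by CDN application code. As a heavily simplified model, the sketch below picks the advertisement with the shortest AS path, which is only one of several criteria real BGP route selection uses. The PoP names are hypothetical and the AS numbers come from the range reserved for documentation.

```python
def best_route(advertisements: dict[str, list[int]]) -> str:
    """Pick the PoP whose advertisement has the shortest AS path (simplified)."""
    return min(advertisements, key=lambda pop: len(advertisements[pop]))

# AS paths as seen from one hypothetical user network toward the same anycast IP.
routes = {
    "ams": [64500, 64496],
    "lax": [64501, 64502, 64496],
}
chosen = best_route(routes)

# If the Amsterdam PoP withdraws its advertisement, traffic shifts automatically.
del routes["ams"]
after_withdrawal = best_route(routes)
print(chosen, after_withdrawal)
```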

7. Security Features in CDNs

a. DDoS Protection

CDNs absorb large-scale attacks using rate limiting, traffic scrubbing, and blackholing mechanisms to prevent service disruptions.
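Rate limiting is often explained with a token bucket, sketched below. This is a generic illustration rather than any CDN's actual mechanism: the class, its parameters, and the idea of keeping one bucket per client are assumptions, and the clock is passed in as `now` to keep the example deterministic.

```python
class TokenBucket:
    """Allow short bursts up to `capacity`, then `rate_per_sec` requests per second."""

    def __init__(self, rate_per_sec: float, capacity: int) -> None:
        self.rate_per_sec = rate_per_sec
        self.capacity = capacity
        self.tokens = float(capacity)
        self.last_seen = 0.0

    def allow(self, now: float) -> bool:
        # Refill tokens for the time elapsed since the previous request.
        elapsed = now - self.last_seen
        self.tokens = min(self.capacity, self.tokens + elapsed * self.rate_per_sec)
        self.last_seen = now
        if self.tokens >= 1:
            self.tokens -= 1
            return True
        return False  # over the limit: drop or challenge the request

bucket = TokenBucket(rate_per_sec=1, capacity=3)
burst = [bucket.allow(now=0.0) for _ in range(5)]  # five requests at once
later = bucket.allow(now=2.0)                      # one request two seconds later
print(burst, later)
```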

b. Web Application Firewall (WAF)

WAFs filter malicious traffic, blocking SQL injection, cross-site scripting (XSS), and other cyber threats at the edge before they reach the origin.

c. Bot Mitigation

By analyzing traffic patterns, CDNs differentiate between legitimate users and bots, mitigating credential stuffing and web scraping attacks.

8. Multi-CDN Architectures

a. Benefits of Multi-CDN

Multi-CDN setups improve redundancy, reduce dependency on a single provider, and optimize performance by leveraging multiple networks.

b. Failover Mechanisms

Multi-CDN setups use real-time health checks to detect outages and reroute traffic to a healthy provider, ensuring high availability.
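Health-check-driven failover can be sketched as follows. The provider names, the threshold of three consecutive failures, and both helper names are hypothetical; in practice the probe results would come from periodic HTTP checks against each CDN.

```python
from typing import Optional

class HealthTracker:
    """Marks a CDN unhealthy after several consecutive failed health checks."""

    def __init__(self, failure_threshold: int = 3) -> None:
        self.failure_threshold = failure_threshold
        self.failures: dict[str, int] = {}

    def record(self, cdn: str, ok: bool) -> None:
        # A success resets the counter; a failure increments it.
        self.failures[cdn] = 0 if ok else self.failures.get(cdn, 0) + 1

    def is_healthy(self, cdn: str) -> bool:
        return self.failures.get(cdn, 0) < self.failure_threshold

def choose_cdn(priority: list[str], tracker: HealthTracker) -> Optional[str]:
    """Return the first healthy CDN in priority order, or None if all are down."""
    for cdn in priority:
        if tracker.is_healthy(cdn):
            return cdn
    return None

tracker = HealthTracker()
priority = ["cdn-a", "cdn-b"]
before = choose_cdn(priority, tracker)
for _ in range(3):
    tracker.record("cdn-a", ok=False)  # three failed probes in a row
during = choose_cdn(priority, tracker)
tracker.record("cdn-a", ok=True)       # the primary recovers
after = choose_cdn(priority, tracker)
print(before, during, after)
```

Requiring several consecutive failures avoids flapping between providers on a single lost probe.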

c. Traffic Steering Strategies

Advanced traffic steering dynamically shifts load based on latency, cost, and server health, optimizing user experience and cost efficiency.
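One way to express such a policy is a weighted score per provider. The scoring formula, the weights, and the sample numbers below are all assumptions for illustration; real steering systems typically use measured user latency and contract-specific pricing.

```python
from dataclasses import dataclass

@dataclass
class Cdn:
    name: str
    latency_ms: float   # hypothetical measured latency
    cost_per_gb: float  # hypothetical price
    healthy: bool = True

def steer(cdns: list[Cdn], latency_weight: float, cost_weight: float) -> str:
    """Pick the healthy CDN with the lowest weighted score (lower is better)."""
    candidates = [c for c in cdns if c.healthy]
    if not candidates:
        raise RuntimeError("no healthy CDN available")
    best = min(
        candidates,
        key=lambda c: latency_weight * c.latency_ms + cost_weight * c.cost_per_gb,
    )
    return best.name

cdns = [
    Cdn("cdn-a", latency_ms=40, cost_per_gb=0.08),
    Cdn("cdn-b", latency_ms=55, cost_per_gb=0.02),
    Cdn("cdn-c", latency_ms=30, cost_per_gb=0.05, healthy=False),
]
fastest = steer(cdns, latency_weight=1.0, cost_weight=0.0)
cheapest = steer(cdns, latency_weight=0.0, cost_weight=1.0)
print(fastest, cheapest)
```

Note that `cdn-c` is never chosen despite having the best latency, because unhealthy providers are filtered out before scoring.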

9. Challenges in CDN Deployments

a. Cache Inconsistencies

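Ensuring cache consistency across global PoPs is challenging. Cache purging removes outdated copies, and TTLs bound how long stale content can persist. Stale-while-revalidate does not make caches consistent, but it keeps responses fast while fresh copies are fetched.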

b. Latency Variability

Network congestion and peering agreements impact latency. Optimized routing and edge computing improve consistency.

c. Cost Considerations

While CDNs reduce bandwidth costs, pricing models based on requests, egress traffic, and PoP utilization require careful cost management.
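A first-pass cost estimate can combine the request and egress components. The prices and traffic volumes below are made-up placeholders, not any provider's rates, and the model leaves out anything tied to PoP utilization or regional pricing.

```python
def monthly_cost(
    requests: int,
    egress_gb: float,
    price_per_10k_requests: float,
    price_per_gb: float,
) -> float:
    """Estimate a monthly CDN bill from request count and egress volume."""
    request_cost = requests / 10_000 * price_per_10k_requests
    egress_cost = egress_gb * price_per_gb
    return request_cost + egress_cost

# Hypothetical month: 50 million requests and 2,000 GB of egress.
estimate = monthly_cost(
    requests=50_000_000,
    egress_gb=2_000,
    price_per_10k_requests=0.01,
    price_per_gb=0.05,
)
print(f"estimated bill: {estimate:.2f}")
```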

10. Real-World Use Cases of CDNs

a. Streaming Platforms (Netflix, YouTube)

CDNs handle massive video delivery workloads, reducing buffering and ensuring smooth playback at scale.

b. E-commerce (Amazon, Shopify)

Retailers use CDNs to accelerate page loads, reduce cart abandonment, and handle high traffic surges.

c. API Acceleration (Google, Facebook)

CDNs cache API responses and optimize REST & GraphQL calls, reducing latency for mobile and web applications.

11. Best Practices for Using a CDN

Optimize cache hit ratios with proper TTL settings.

Use smart routing algorithms to enhance performance.

Monitor traffic patterns, cache efficiency, and security threats.
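Cache hit ratio is the usual starting metric for this kind of monitoring. The sketch below computes it from hit and miss counts and shows how it translates into origin load; the counter values are invented for the example.

```python
def cache_hit_ratio(hits: int, misses: int) -> float:
    """Fraction of requests answered from cache (0.0 when there is no traffic)."""
    total = hits + misses
    return hits / total if total else 0.0

def origin_requests(total_requests: int, hit_ratio: float) -> int:
    """Requests that still reach the origin at a given hit ratio."""
    return round(total_requests * (1 - hit_ratio))

ratio = cache_hit_ratio(hits=950, misses=50)
to_origin = origin_requests(1_000_000, ratio)
print(f"hit ratio: {ratio:.0%}, origin requests per million: {to_origin}")
```

Small changes near the top matter: moving from a 95% to a 99% hit ratio cuts origin traffic by a factor of five.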

12. Interview Questions Covered

How does a CDN improve performance?

What are the trade-offs between different caching strategies?

How does Anycast routing benefit a CDN?

How would you design a global CDN system?

🚀 Mastering CDN architectures is essential for building scalable, high-performance systems!